ProdSimulator

Stop scoring CVs. Throw candidates into real work scenarios to identify the strong ones early.

Meaningful screening before interviews · Try 3 evaluations for free

Polished stories and keywords still tell you very little about how someone operates in a messy real situation.

Reviewing large candidate pools manually is slow, and weak early filters make it easy to miss strong people.

Real signal often appears only in engineer-led interviews, after you've already spent time on weak fits.

Candidates can be prepped, and take-home tasks can be outsourced to AI. Traditional screening keeps losing signal.

So we built a way to measure exactly that.

Paste your job description and let the app prepare a scenario suited to your specific stack, architecture, and constraints.

Candidates get a simple email or direct link that takes them straight into the simulation experience.

They step into a situation with teammates, constraints, and incomplete information. No generic quiz questions, just real decisions.

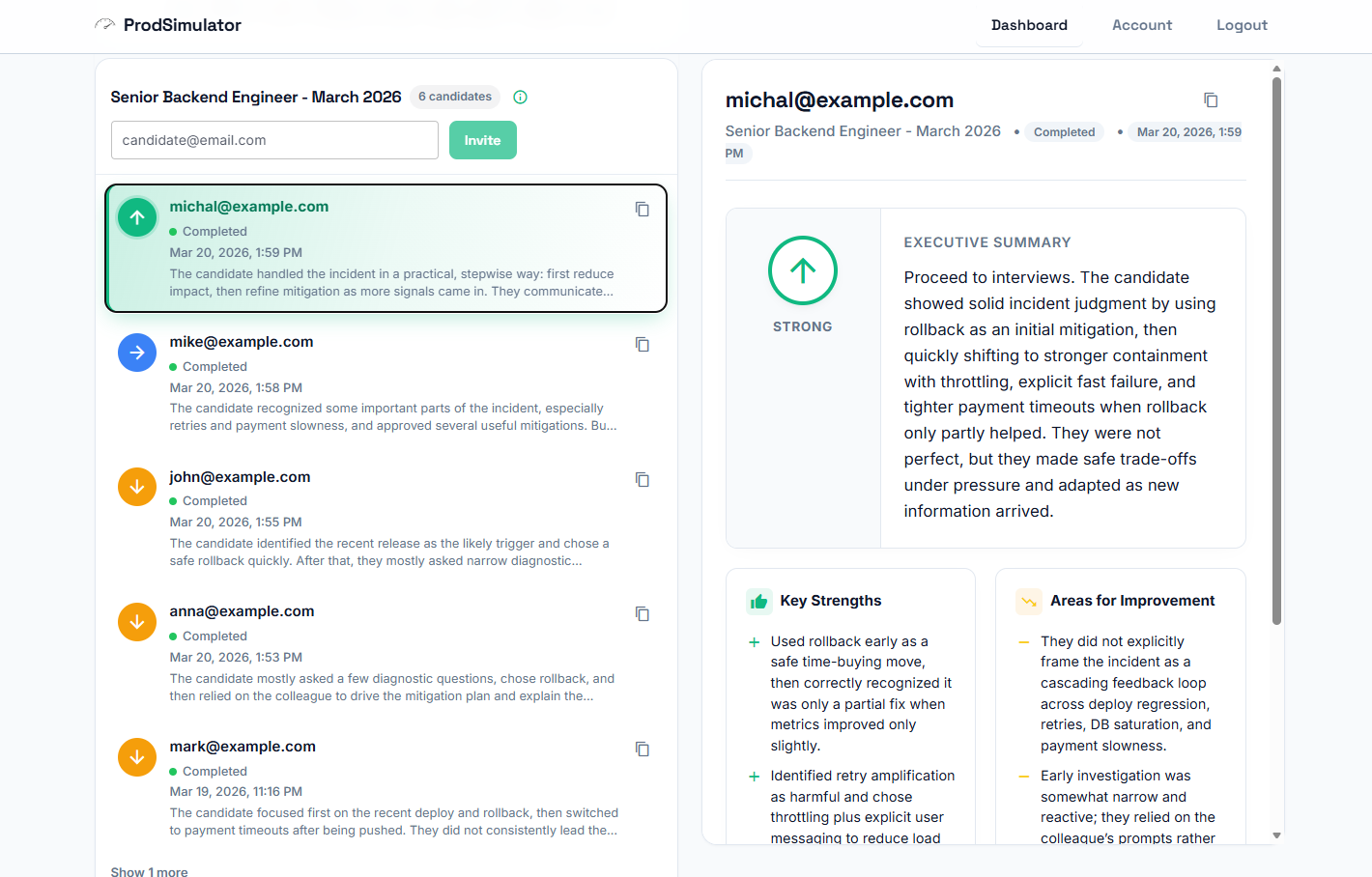

After the scenario, candidates explain their choices. You review how they prioritized, reasoned through uncertainty, and communicated.

You’ve been invited to complete a short production simulation.

The core scenarios are crafted by people who understand how production systems fail, how teams respond, and where real judgment shows up.

While the baseline scenario is robust, we automatically adapt the stack, scale, architecture, and operational context to reflect the role you are actually hiring for.

The value isn't just in asking a question, but in presenting a realistic situation that exposes prioritization and decision quality.

Do they focus on the highest-leverage problem first, or get lost in lower-impact tasks?

Do they reduce ambiguity methodically, or make assumptions before they understand the system?

Can they explain trade-offs, risks, and next steps clearly to teammates and non-engineers?

Do they move quickly without losing sight of system impact, operational risk, and business context?

Not what they claim, but how they operate when the system isn't ideal.

Use it to filter large pools before interviews and identify strong candidates earlier.

Replace CV guessing with observed behavior and avoid spending interview time on weak fits.

Simple interface. Meaningful screening signal.

Bring ProdSimulator into your hiring workflow with onboarding, volume support, and a setup call tailored to your roles.

We will help map scenarios to your roles, show you the recruiter workflow, and set up your first hiring pipeline.

Prefer self-serve? Per-evaluation pricing is still available inside the app.

Register to get 3 free evaluations, and we will add 50 more for free on the onboarding call.

You can optimize your CV. You can even optimize yourself for interviews.

But you can’t fake engineering when systems get messy.

So I built a way to observe decisions instead of answers.

“The best engineers aren’t the ones who know the most.

They’re the ones who don’t panic when nothing makes sense.”

A simulation is the scenario the candidate goes through (e.g. production incident, trade-off decision).

An evaluation is the result you receive after the candidate completes it.

You only pay for completed evaluations, not for creating or assigning simulations.

No. You only pay when a candidate completes the simulation and produces a valid evaluation.

If they drop off or don’t finish, it doesn’t count.

A valid evaluation means the candidate meaningfully engaged with the scenario and completed the simulation flow.

Incomplete sessions, spam responses, or abandoned attempts are not counted.
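
As a rough illustration of the counting rule above, here is a minimal sketch in Python. The `Session` fields (`completed`, `engaged`) and the way free evaluations are subtracted are assumptions made for this example, not ProdSimulator's actual data model or billing logic.

```python
from dataclasses import dataclass


@dataclass
class Session:
    candidate: str
    completed: bool  # hypothetical flag: candidate finished the simulation flow
    engaged: bool    # hypothetical flag: responses were meaningful, not spam


def is_valid_evaluation(session: Session) -> bool:
    # Only completed sessions with meaningful engagement count.
    return session.completed and session.engaged


def billable_evaluations(sessions: list[Session], free_evaluations: int = 3) -> int:
    # Assumption: free evaluations are used up by the first valid ones.
    valid = sum(1 for s in sessions if is_valid_evaluation(s))
    return max(0, valid - free_evaluations)


sessions = [
    Session("a", completed=True, engaged=True),
    Session("b", completed=True, engaged=True),
    Session("c", completed=False, engaged=True),   # abandoned, not counted
    Session("d", completed=True, engaged=False),   # spam, not counted
    Session("e", completed=True, engaged=True),
    Session("f", completed=True, engaged=True),
]
billable = billable_evaluations(sessions)
```

In this example four sessions are valid, so with three free evaluations only one would be billed.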

ProdSimulator doesn’t give you a “perfect score.”

It shows how a candidate behaves in realistic situations: prioritization, decision-making, communication.

Use it to decide who is worth interviewing, not as a final hiring decision.

Yes. ProdSimulator automatically tweaks scenarios based on your job description. The simulation adapts to reflect the kind of situations relevant to your specific role.

Sure! If you don’t find the evaluations useful, you can request a refund.

No lock-in, no long-term commitment.

Typically 6–10 minutes.

Short enough to complete, long enough to reveal how a candidate thinks.

No. It replaces early screening.

You start interviews with candidates who have already demonstrated real-world thinking.

Not easily. Simulations require reasoning in dynamic situations, not memorized answers.

Furthermore, AI-assisted responses are easy to detect and will be flagged as invalid.